MemoryArena: Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Benchmarking Agentic Memory

- Current LLM benchmarks evaluate memorization and action in isolation, failing to reflect how agents use memory to guide future decisions in real-world scenarios.

- MEMORYARENA is a new unified evaluation gym designed to test agents in multi-session 'Memory-Agent-Environment' loops.

- The framework uses human-crafted, interdependent subtasks that require agents to distill past experiences into memory for later use.

- Initial results show a significant performance gap, as models that excel at standard memory recall benchmarks struggle with memory-driven decision-making.

In realistic settings, memorization and action are tightly coupled: agents acquire memory while interacting with the environment, and subsequently rely on that memory to solve future tasks.

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Zexue He* 1Yu Wang* 2Churan Zhi* 2Yuanzhe Hu* 2Tzu-Ping Chen* 2Lang Yin* 3Ze Chen4

Tong Arthur Wu5Siru Ouyang3Zihan Wang6Jiaxin Pei1Julian McAuley2Yejin Choi1Alex Pentland1

Abstract

Existing evaluations of agents with memory typ-

ically assessmemorizationandactionin isola-

tion. One class of benchmarks evaluates memo-

rization by testing recall of past conversations or

text but fails to capture how memory is used to

guide future decisions. Another class focuses on

agent acting in single-session tasks without the

need for long-term memory. However, in realis-

tic settings, memorization and action are tightly

coupled: agents acquire memory while interact-

ing with the environment, and subsequently rely

on that memory to solve future tasks. To capture

this setting, We introduce MEMORYARENA, a uni-

fied evaluation gym for benchmarking agent mem-

ory inmulti-session Memory-Agent-Environment

loops. The benchmark consists of human-crafted

agentic tasks with explicitly interdependent sub-

tasks, where agents must learn from earlier

actions and feedback by distilling experiences

into memory, and subsequently use that mem-

ory to guide later actions to solve the overall

task. MEMORYARENAsupports evaluation across

web navigation, preference-constrained planning,

progressive information searching, and sequen-

tial formal reasoning, and reveals that agents

with near-saturated performance on existing long-

context memory benchmarks like LoCoMo per-

form poorly in our agentic setting, exposing a

gap in current evaluations for agents with mem-

ory. MEMORYARENAis released at https:

//memoryarena.github.io/.

1. Introduction

Large language model (LLM) agents have two complemen-

tary core capabilities: the ability to memorize task-relevant

knowledge over time (memorization) and the ability to act

*Equal contribution1Stanford University2UCSD3UIUC

4Princeton University5University of Pittsburgh62077AI. Corre-

spondence to: Zexue He<zexueh@stanford.edu>.

Preprint. February 19, 2026.

RetrievedMem. Subtask Inst. _LLM AgentEnvironmentMemorySession DMemory systemOrganizingUpdating

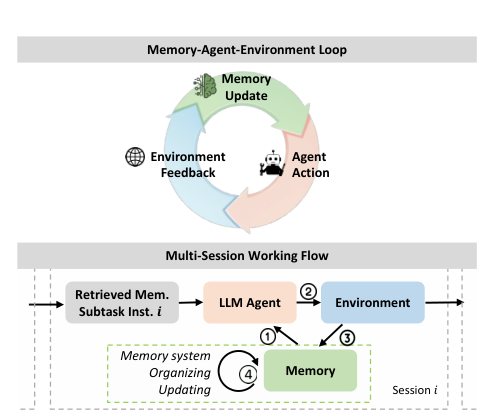

Memory-Agent-Environment Loop

Multi-Session Working Flow

Agent ActionEnvironmentFeedbackMemoryUpdate

Figure 1.MEMORYARENAEvaluates agents with Memory with

multi-session tasks in a Memory-Agent-Environment Loop.

through interaction with an environment (action) (Hu et al.,

2025b). However, existing evaluations of LLM agents with

memory typically isolate and assess only one aspect. The

first class of benchmarks focuses on evaluatingmemoriza-

tionthrough recall or retrieval over static long-context inputs

in question answering or summarization settings (Wu et al.,

2025; Zhong et al., 2024; Maharana et al., 2024; Hu et al.,

2025b), including benchmarks such as LoCoMo (Maharana

et al., 2024) and LongMemEval (Wu et al., 2025). In these

setups, agents are required to memorize provided conver-

sations or text chunks, and are evaluated on whether they

can recall specific information through downstream QA

tasks. However, despite being effective at measuring factual

recall, such benchmarks do not involve agentic decision-

making, environment dynamics, or action-dependent con-

sequences. As a result, although contemporary memory

systems achieve near-saturated performance on these bench-

marks, it remains unclear whether such gains meaningfully

translate to improved performance for LLM agents operat-

ing in goal-driven, interactive settings.

In contrast, the second class of benchmarks (Yao et al.,

2022; Zhou et al.; Deng et al., 2023), such as SWE-

Bench (Jimenez et al., 2023) and WebArena (Zhou et al.),

primarily evaluateactionby placing agents in dynamic en-

vironments, but are typically confined to a single session.

1arXiv:2602.16313v1 [cs.CL] 18 Feb 2026

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Memory-Agent-Env. Loops Task Settings

BenchmarkMemory

Eval.Agentic

ActionsEnv.

Benchmarking Agent Memory Loops

- Existing AI benchmarks often treat interaction history as a flat context, failing to evaluate memory beyond short-term context windows.

- The researchers propose a Memory-Agent-Environment loop where memorization and action are treated as inseparable components of behavior.

- The MEMORYARENA gym introduces interdependent subtasks where success requires tracking latent constraints across multiple sessions.

- New evaluation tasks in MEMORYARENA are intentionally underspecified to ensure agents must recall information from previous interactions to succeed.

We argue that agent memory should be evaluated by treating memorization and action as inseparable components of agentic behavior.

n, interactive settings.

In contrast, the second class of benchmarks (Yao et al.,

2022; Zhou et al.; Deng et al., 2023), such as SWE-

Bench (Jimenez et al., 2023) and WebArena (Zhou et al.),

primarily evaluateactionby placing agents in dynamic en-

vironments, but are typically confined to a single session.

1arXiv:2602.16313v1 [cs.CL] 18 Feb 2026

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Memory-Agent-Env. Loops Task Settings

BenchmarkMemory

Eval.Agentic

ActionsEnv.

FeedbackMulti-Sess.

TasksInterdep.

ST# T

(# Q)# Interdep.

ST#S

LOCOMO (Maharana et al., 2024)✓ ✗ ✗ ✓ ✗7512 1

N/A1 LongMemEval (Wu et al., 2025)✓ ✗ ✗ ✓ ✗500 1

MemoryAgentBench (Hu et al., 2025b)✓ ✗ ✗ ✓ ✗2k 1

MemoryBench (Ai et al., 2025)✓ ✗ ✗ ✓ ✗778 1

WebArena (Zhou et al.)✗ ✓ ✓ ✗ ✗812 1 13.3

WebShop(Yao et al., 2022)✗ ✓ ✓ ✗ ✗200 1 7.3

VeriGUI (Liu et al., 2025)✗ ✓ ✓ ✓ ✗130 4.5 214

Evo-Memory (Wei et al., 2025b)✓ ✓ ✓ ✓ ✗N/A2N/A2N/A2

AgencyBench (Li et al., 2026a)✗ ✓ ✓ ✓ ✓1384.31390

MEMORYARENA✓ ✓ ✓ ✓ ✓766 6.9 57

Table 1.We compare benchmarks along key dimensions: if the benchmark evaluates different memory mechanism, if it evaluates agent

actions, and if it involves environment feedbacks in memory–agent–environment loops. We also compare their evaluation task settings

and scales. (Notations:T.: tasks;ST.: subtasks;Env.: environment;Interdep.: interdependent;S.: Steps,Q: Queries). Green checkmarks

indicate supported features; red crosses indicate unsupported features.Note 1:These benchmarks use long-context conversational QA

tasks without agentic actions; thus, the number of action steps is Not Applicable (N/A).Note 2:Evo-Memory constructs a multi-session

setting by executingindependenttasks from existing single-session agent benchmarks sequentially. Because these tasks aredirectly

reused, there is no explicit subtask-level dependency or cross-session causal structure enforced. So the number of tasks, interdependent

subtasks, and per-task action steps cannot be meaningfully defined or aggregated. We marked them as N/A.Note 3:Computed from the

official AgencyBench-v2 release.

In these settings, the previous interaction history is treated

as flat context whenever it fits within the model’s context

window, so information beyond short-term working memory

is not causally required. However, in practical tasks, early

interactions often introduce latent constraints, including

compatibility requirements, shared preferences, and inter-

mediate reasoning outcomes, that are not explicitly restated

by the environment yet must be preserved and applied in

subsequent decisions. As a result, success in these bench-

marks does not reliably reflect an agent’s ability to retain

and utilize information over extended horizons.

We argue that agent memory should be evaluated by treat-

ing memorization and action as inseparable components of

agentic behavior. This requires assessing memory within a

full interaction process, in which actions elicit environment

feedback, feedback updates memory, and memory in turn

conditions subsequent action selection across multi-session

task execution. We refer to this process as aMemory-Agent-

Environment loop, which unfolds over multiple episodes or

sessions. In such settings, task success critically depends on

an agent’s ability to retain and correctly reuse information

acquired in earlier interactions.

To this end, we introduce MEMORYARENA, a unified evalua-

tion gym for benchmarking the usefulness of agent memory

usingmulti-session,interdependent agentic tasks. MEM-

ORYARENAconsists of human-crafted tasks with interdepen-

dent subtasks, where later actions are underspecified unless

agents correctly track task-relevant information from prior

sessions. We instantiate MEMORYARENAacross four do-

mains, including(1) bundled web shopping,(2) preference-constrained group travel planning,(3) progressive informa-

tion searching, and(4) sequential formal reasoningover

math and physical problems.

MEMORYARENA: Benchmarking Agent Memory

- MEMORYARENA is a new benchmark featuring human-crafted, multi-session tasks that require agents to track and apply information across interdependent subtasks.

- The framework spans four diverse domains, including web shopping, travel planning, information search, and sequential formal reasoning with average traces exceeding 40k tokens.

- While previous benchmarks focused on static retrieval or post hoc recall, MEMORYARENA evaluates whether agents can persistently store and actively utilize memory during execution.

- Experimental results reveal that current state-of-the-art agents fail to maintain latent task states effectively, leading to low completion rates in complex environments.

Despite their strong performance on existing memory benchmarks, these agents exhibit low task completion rates in MEMORYARENA, revealing persistent difficulties in maintaining and exploiting latent task state across sessions.

ss of agent memory

usingmulti-session,interdependent agentic tasks. MEM-

ORYARENAconsists of human-crafted tasks with interdepen-

dent subtasks, where later actions are underspecified unless

agents correctly track task-relevant information from prior

sessions. We instantiate MEMORYARENAacross four do-

mains, including(1) bundled web shopping,(2) preference-constrained group travel planning,(3) progressive informa-

tion searching, and(4) sequential formal reasoningover

math and physical problems. Each task spans long horizons

(with an average of 57 action steps) and produces extended

reasoning traces with more than 40k tokens. Table 1 com-

pares MEMORYARENAwith existing memory and agent

benchmarks along key dimensions.

MEMORYARENAevaluates various classes of state-of-the-

art agents, including long-context agents, agents augmented

with retrieval-augmented generation (RAG) systems, and

agents coupled with external memory systems, under a uni-

fied setting. Despite their strong performance on existing

memory benchmarks, these agents exhibit low task comple-

tion rates in MEMORYARENA, revealing persistent difficul-

ties in maintaining and exploiting latent task state across

sessions. This gap shows that success on current bench-

marks does not translate to effective memory use for guid-

ing future actions in agentic settings, underscoring the need

for more rigorous evaluation of long-horizon, multi-session

agent memory.

2. Related Works

Evaluation Focusing on Memory.Prior work evaluates

LLM memorization primarily through long context under-

standing and recall oriented benchmarks. Early stress test

evaluations such as Needle in a Haystack1probe a model’s

ability to retrieve salient information embedded within ex-

tended contexts. Subsequent benchmarks including Long-

1https://www.anthropic.com/news/claude-3-family

2

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Bench (Bai et al., 2024), L-Eval (An et al., 2024), RULER

(Hsieh et al., 2024), and ∞-Bench (Zhang et al., 2024)

systematize this retrieval based evaluation through ques-

tion answering, summarization, and synthetic retrieval tasks.

More recent efforts extend long context evaluation to con-

versational or episodic settings. LoCoMo (Maharana et al.,

2024), LongMemEval (Wu et al., 2025), MemoryAgent-

Bench (Ai et al., 2025), MemoryBench (Ai et al., 2025),

and EvoMem (Wei et al., 2025b) assess whether models

can retain and recall information introduced in the previous

interactions. However, these benchmarks primarily evaluate

static memorization through post hoc recall using a single

query and do not involve an agentic or interactive environ-

ment in which memory must be actively used. In contrast,

MEMORYARENAfocuses on LLM agents equipped with

explicit memorization mechanisms and evaluates memory

usage in sequential multi session agentic settings. Our eval-

uation emphasizes whether information acquired during

earlier interactions can be persistently stored and correctly

utilized to support later task execution, reflecting more real-

istic long-term agent behavior.

Evaluation Focusing on Agentic Abilities.A comple-

mentary line of work evaluates LLM agents through in-

teractive execution benchmarks that emphasize model rea-

soning, action selection, and tool use in dynamic envi-

ronments. Web-based agent environments such as Web-

Shop (Yao et al., 2022), Mind2Web (Deng et al., 2023),

and Mind2Web 2 (Gou et al., 2025) assess an agent’s abil-

ity to navigate web interfaces, invoke tools, and execute

grounded actions in response to web transitions. Coding

environments, such as SWE-bench (Jimenez et al., 2023),

focus on software engineering tasks that require iterative

reasoning and tool-mediated code edits to resolve isolated is-

sues. More recent compositional search benchmarks such as

BrowseComp (Wei et al., 2025a) and BrowseComp+ (Chen

et al., 2025) evaluate agents’ capacity for deep research.

MemoryGym (Pleines et al.

Evaluating Agent Persistent Memory

- Existing AI benchmarks primarily evaluate agents on single-session tasks, failing to measure the role of persistent memory across multiple episodes.

- Current memory benchmarks often focus on static retrieval or question-answering rather than the active application of skills in interdependent task sequences.

- MEMORYARENA introduces cross-task causal dependencies, requiring agents to absorb experiences and apply new understandings to future decisions.

- The benchmark covers diverse domains including web shopping, travel planning, and formal reasoning in mathematics and physics.

- By shifting from fact recall to sequential task completion, researchers aim to see if agents can truly learn from past actions to improve future performance.

MEMORYARENA is the first one designed to assess agent memory using sequential subtasks with causal dependencies across sessions.

gent’s abil-

ity to navigate web interfaces, invoke tools, and execute

grounded actions in response to web transitions. Coding

environments, such as SWE-bench (Jimenez et al., 2023),

focus on software engineering tasks that require iterative

reasoning and tool-mediated code edits to resolve isolated is-

sues. More recent compositional search benchmarks such as

BrowseComp (Wei et al., 2025a) and BrowseComp+ (Chen

et al., 2025) evaluate agents’ capacity for deep research.

MemoryGym (Pleines et al., 2025) measures within-episode

retention in a partially observable control 2D environment.

While these benchmarks provide valuable testbeds for eval-

uating agent execution and reasoning, they are typically

formulated as single-session, independent tasks and do not

require persistent memory across episodes. As a result, the

role of agent memory is not explicitly evaluated. Recent

work (Zhong et al., 2024; Wei et al., 2025b) feeds agentic

tasks from above benchmarks in a streaming manner to en-

able test-time learning. However, unlike our setting, these

evaluations do not enforce explicit dependencies across in-

dividual tasks. MEMORYARENAis the first one designed to

assess agent memory using sequential subtasks with causal

dependencies across sessions.

Several recent benchmarks highlight the gap between infor-

mation recall from long conversation history and agentic

deployment, but still most evaluate memory via question#min ST

(or Sess.)#max ST

(or Sess.)# avg T.

Trace L# T (Groups

of Subtasks)

Bundled Web Shopping 6 6 41.5k 150

Included domain[Grocery, Beauty, Electronics, Home Decor, Baking]

Group Travel Planning 5 9 40.6k 270

Progressive Web Search 2 16 122.4k 256

Math Formal Reasoning 2 16 18.1k 40

Included Domains [Pure math, Optimization, Learning theory]

Phys. Formal Reasoning 2 12 14.1k 20

Included Domains[High energy theory, High energy phenomenology,

High energy lattice, Condensed matter theory]

Table 2.Benchmark Statistics in MEMORYARENA.

answering or tool grounding over a fixed history

Mem2ActBench (Shen et al., 2026), MemTrack (Desh-

pande et al., 2025), EMemBench (Li et al., 2026b), and

AgentLongBench (Fang et al., 2026) construct long tool-

call traces or enterprise-style workflow timelines and test

whether agents can retrieve the correct facts or parameters

to answer/complete post-hoc follow-up queries. They focus

on retrieval from static reasoning traces rather than interde-

pendent task sequences where distilled skills can influence

future execution (e.g., learning from inductive problems in

formal reasoning in MEMORYARENA). AgencyBench (Li

et al., 2026a) and Beyond Task Completion (Akshathala

et al., 2025) incorporate memory into agent execution, but

use simple fixed add-and-retrieve tools, prioritizing over-

all agent capability over systematic evaluation on memory

mechanisms. In contrast, MEMORYARENAenforcescross-

task causal dependenceand evaluates memory through

end-to-end sequential task completion, measuring if agents

can absorb experiences, acquire new skills, distill reusable

knowledge from the past and eventually apply the new skill

and understandings to inform future decisions rather than

merely recalling previously seen facts.

3. MEMORYARENA: Agent Memory in

Memory-Action-Environment Loops

3.1. Task Composition and Data Preparation

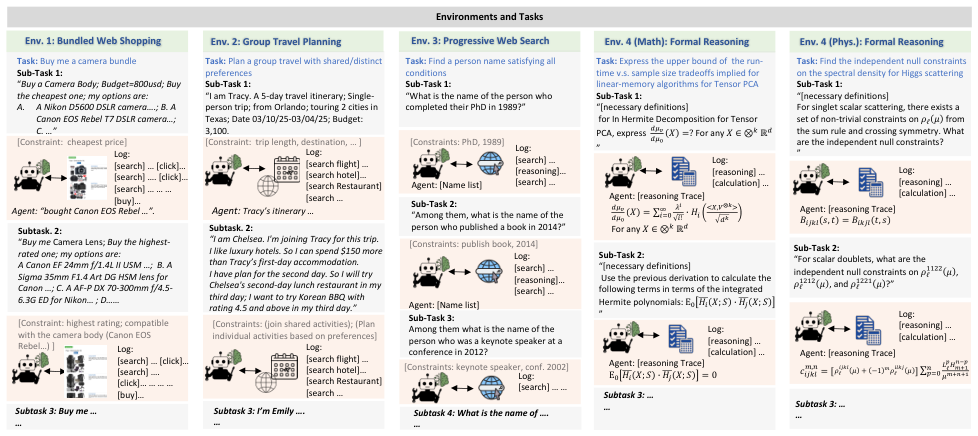

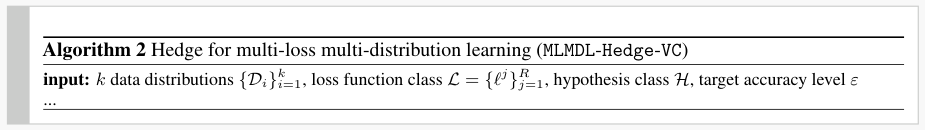

Web Navigation: Bundled Web Shopping.The Bundled

Web Shopping environment models real-world shopping

scenarios in which users purchase related products over

time rather than in a single transaction. Later purchases

depend on recalling attributes of earlier items to ensure

compatibility and preference consistency. We construct

the Bundled Web Shopping environment by extending the

shopping environment of (Yao et al., 2022), which contains

tens of thousands of products with detailed descriptions

and hierarchical category annotations.

Interdependent Agent Task Environments

- The Bundled Web Shopping environment simulates realistic scenarios where users make sequential, related purchases requiring memory of previous choices.

- Product compatibility serves as a primary constraint, such as ensuring a specific camera lens matches a previously selected camera body.

- Group Travel Planning tests an agent's ability to coordinate shared and individual preferences across multiple trip participants and travel days.

- Progressive Web Search tasks require agents to refine candidate lists by applying new constraints to information retrieved in earlier sessions.

- Formal reasoning environments in math and physics challenge agents to utilize previous derivations to solve complex, multi-step theoretical problems.

Later purchases depend on recalling attributes of earlier items to ensure compatibility and preference consistency.

.The Bundled

Web Shopping environment models real-world shopping

scenarios in which users purchase related products over

time rather than in a single transaction. Later purchases

depend on recalling attributes of earlier items to ensure

compatibility and preference consistency. We construct

the Bundled Web Shopping environment by extending the

shopping environment of (Yao et al., 2022), which contains

tens of thousands of products with detailed descriptions

and hierarchical category annotations. To reduce long-tail

noise, we restrict our data to products from the five largest

domains: Electronics, Home Decor, Baking, Beauty and Per-

sonal Care, and Grocery. Leveraging the category hierarchy,

we first identify candidate groups of potentially compatible

products by clustering items that share the same category

3

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Env

.

4 (Phys.): Formal Reasoning

Env

. 1: Bundled Web ShoppingTask: Buy me a camera bundleSub-Task 1:“Buy a Camera Body; Budget=800usd; Buy the cheapest one; my options are: A.A Nikon D5600 DSLR camera….; B. A Canon EOS Rebel T7 DSLR camera…; C. …” [Constraint: cheapest price]Subtask. 2:“Buy me Camera Lens; Buy the highest-rated one; my options are:A Canon EF 24mm f/1.4L II USM …; B. A Sigma 35mm F1.4 Art DG HSM lens for Canon …; C. A AF-P DX 70-300mm f/4.5-6.3G ED for Nikon…; D……[Constraint: highest rating; compatible with the camera body (Canon EOS Rebel…) ]Log: [search] …[click]…[search] …. [click]…[search] ………[buy]…Agent: “bought Canon EOS Rebel …”.

Log: [search] …[click]…[search] …. [click]…………[buy]…Subtask 3: Buy me ……Task: Plan a group travel with shared/distinct preferencesSub-Task 1:“I am Tracy. A 5-day travel itinerary; Single-person trip; from Orlando; touring 2 cities in Texas; Date 03/10/25-03/04/25; Budget: 3,100.[Constraint: trip length, destination, …]Subtask. 2:“I am Chelsea. I'm joining Tracy for this trip.I like luxury hotels. So I can spend $150 more than Tracy’s first-day accommodation. I have plan for the second day. So I will try Chelsea's second-day lunch restaurant in my third day; I want to try Korean BBQ with rating 4.5 and above in my third day.”Log: [search flight] …[search hotel]…[search Restaurant] [search …Agent: Tracy’s itinerary …

Subtask 3: I’m Emily ….…Log: [search flight] …[search hotel]…[search Restaurant] [search …

Env

.

4 (Math): Formal Reasoning

Env

.

3

:

Progressive

Web

SearchTask: Find a person name satisfying allconditionsSub-Task 1:“What is the name of the person who completed their PhD in 1989?”[Constraints: PhD, 1989]Sub-Task 2:“Among them, whatis the name of the person who published a book in 2014?”[Constraints: publish book, 2014]

Log: [search] …[reasoning]…[search] …

Log: [search] …[reasoning]…[search] …Task: Express the upper bound of the run-time v.s. sample size tradeoffs implied for linear-memory algorithms for Tensor PCASub-Task 1:“[necessary definitions]for In HermiteDecomposition for Tensor PCA, express !"#!"$%=?For any %∈ ⊗+ℝ!”Log: [reasoning] …[calculation] …

Agent: [Name list]

Agent: [Name list]Agent: [reasoning Trace] !"#!"$%=∑./0! �34056,8⨂:;!:�<0=>For any %∈ ⊗+ℝ!Sub-Task 2:“[necessary definitions]Use the previous derivation to calculate the following terms in terms of the integrated Hermitepolynomials:Ε>40%;A34B%;A ”Log: [reasoning] …[calculation] …

Agent: [reasoning Trace] Ε>40%;A34B%;A=0 Subtask 3: ……

Env

. 2: Group T

ravel Planning

[Constraints: (join shared activities); (Plan individual activities based on preferences]

Log: [reasoning] …[calculation] …

Log: [search] ……Subtask 4: What is the name of ….…Environments and TasksTask:Find the independent null constraints on the spectral density for Higgs scatteringSub-Task 1:“[necessary definitions]For singlet scalar scattering, there exists a set of non-trivial constraints on GℓIfrom the sum rule and crossing symmetry. What are the independent null constraints?

Designing Memory-Augmented Agent Tasks

- MEMORYARENA evaluates agents using interdependent subtasks that require the retention and integration of information across multiple sessions.

- The bundled shopping task uses coarse compatibility trees and fine-grained 'accept-reject' maps to test reasoning about product relationships.

- To ensure a unique solution, human annotators create multi-session instructions featuring incompatible distractors and specific selection constraints.

- Progressive web search tasks are designed to force agents to incrementally add constraints, preventing them from solving the problem in a single interaction.

- The evaluation framework deliberately filters out trivial tasks that do not demand long-term memory or cross-session information reuse.

Solving each session requires the agent to recall prior purchases, identify compatibility constraints, discard negative options, and select a valid product.

ravel Planning

[Constraints: (join shared activities); (Plan individual activities based on preferences]

Log: [reasoning] …[calculation] …

Log: [search] ……Subtask 4: What is the name of ….…Environments and TasksTask:Find the independent null constraints on the spectral density for Higgs scatteringSub-Task 1:“[necessary definitions]For singlet scalar scattering, there exists a set of non-trivial constraints on GℓIfrom the sum rule and crossing symmetry. What are the independent null constraints?”Log: [reasoning] …[calculation] …Agent: [reasoning Trace]J0B+KL,M=J0+BKM,LSub-Task 2:“For scalardoublets,what are the independent null constraints on GℓNNOOI, GℓNONOI, and GℓNOONI?”Agent: [reasoning Trace]P0B+KQ,R=[ ]∑UℓVWXYZ[\V"XY[YZR]=>Subtask 3: ……Gℓ0B+KI+−1QGℓ0K+BISub-Task 3: Among them what is the name of the person who was a keynote speaker at a conference in 2012?[Constraints: keynote speaker, conf. 2002]

Figure 2.MEMORYARENAsupports four distinct evaluation environments, where a memory-augmented task agent completes a sequence

of interdependent subtasks. Each subtask session involves multiple agent actions.

path up to the penultimate level (for example, televisions

from“Electronics >Television & Video >Televisions >TV

Mounts, Stands & Turntables”and TV mounts from“Elec-

tronics >Television & Video >Televisions >LED & LCD

TVs”fall under the same category tree). This procedure

yields coarse compatibility trees, serving as the structural

basis to design bundle shopping instructions.

We then apply a fine-grained filtering process based on

product features. We extract key attributes from product

descriptions and constructaccept–rejectmaps that encode

feature-level compatibility between product pairs using com-

monsense reasoning (e.g., a75-inch TVacceptsa stand with

70 inches longbut rejectsa 50-inch stand). These maps are

used to form chains of compatible products across sessions

and to generate auxiliary incompatible items as negative

distractors. Human annotators then manually verify all com-

patibility chains and remove invalid combinations. Finally,

annotators compose multi-session shopping instructions in

which each session presents a mixture of incompatible dis-

tractors, compatible candidates, and an additional selection

constraint (e.g., highest rating or highest price) to guarantee

a unique compatible item is satisfied. Solving each session

requires the agent to recall prior purchases, identify compati-

bility constraints, discard negative options, and select a valid

product. Using this process, we construct 150 representative

multi-session bundled shopping tasks as the final test set.

More details in data creation are in Appendix. A.2.1.

Compositional Information Seeking: Progressive Web

SearchWe evaluate an agent’s ability to accumulate and

reuse information across multiple search steps, where each

step introduces an additional searching condition, and the fi-nal answer must satisfy all previously introduced conditions.

Conceptually, this setting follows a form ofprogressive in-

formation seeking, in which a user begins with a coarse

specification of the target and incrementally adds new con-

straints over time, requiring the agent to retain and integrate

information acquired in earlier searches.

Our test data builds upon BrowseComp-Plus (Chen et al.,

2025). Starting from its 830 entries, we apply a two-stage

filtering and annotation process. First, we evaluate the origi-

nal entries using a large language model agent with access

to web search tools, and remove instances that the agent

can answer correctly in a single interaction. These filtered

instances are solvable without retaining or recalling any

information beyond the current prompt and tool responses,

i.e., they do not require storing, accumulating, or reusing

information across interactions and therefore place no de-

mand on long-term memory.

Interdependent Agentic Task Benchmarks

- The study implements a filtering phase to exclude queries solvable in one step, focusing purely on tasks requiring long-term memory.

- Researchers decompose complex queries into a series of subqueries that impose a strict causal ordering of information acquisition.

- The group travel benchmark models realistic logistics where participants join a trip incrementally and add potentially conflicting preferences.

- These traveler constraints create intricate dependency chains that require agents to reason about how new requests interact with previous decisions.

I want to stay at a hotel with at least a two-level higher rating than Rebecca’s.

iltering and annotation process. First, we evaluate the origi-

nal entries using a large language model agent with access

to web search tools, and remove instances that the agent

can answer correctly in a single interaction. These filtered

instances are solvable without retaining or recalling any

information beyond the current prompt and tool responses,

i.e., they do not require storing, accumulating, or reusing

information across interactions and therefore place no de-

mand on long-term memory. For the remaining instances,we

decompose each query into a group of subqueries, where

each subquery introduces one additional constraint. Note

that search conditions are listed in parallel in BrowseComp-

Plus. Therefore, all decomposed query groups undergo the

second verification by human annotators. Annotators first

assess whether the decomposition is semantically coherent,

has no repetition, or other mistakes, and identify the cor-

rect search result for each subquery conditioned only on

information available from preceding subqueries. If any

subquery is unanswerable under these constraints (for ex-

ample, if it depends on information introduced only in later

subqueries), the entire group is discarded. This process en-

forces a strict causal ordering among subqueries. Finally,

we retain 256 high-quality compositional search tasks with

dependent subqueries and annotated answers as the test set

4

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

in this task.

Preference-constrained Planning: Group TravelOur

environment models realistic group travel scenarios in which

an initial itinerary is planned by one traveler and additional

participants join incrementally. More realistically, while

group members may share common activities due to over-

lapping interests, they may also request individualized or

partial-group arrangements when preferences diverge. Sup-

porting such scenarios requires an agent to recall precisely

previous activities and traveler preferences, and to reason

about how new constraints interact with existing plans.

We build this environment based on TravelPlanner (Xie

et al., 2024), where a trip is represented as a sequence of

daily activity slots (e.g., 3 meals, accommodations, sight-

seeing). We start with 45 single-traveler instances with a

fully specified ground-truth itinerary. Then we transform

each instance into a group travel scenario by treating the

original traveler as a base participant with a fixed itinerary,

and sequentially adding 5 to 8 additional travelers.

New travelers, by default, follow the base itinerary as shared

group travel, but may specify personalized constraints that

modify individual activity slots. These constraints take

one of two forms. JOIN constraints specify that a traveler

wishes to share a particular activity with another previously

joined member (e.g., “I want to have dinner with Rebecca

on the second day”), requiring the planning agent to assign

the same activity choice to the later traveler. RELATION

constraints define preferences relative to another member’s

choice, expressed through comparisons along attributes such

as price, rating, cuisine, room type, or house rules (e.g., “I

want to stay at a hotel with at least a two-level higher rating

than Rebecca’s”).

All constraints are carefully designed to progressively nar-

row the feasible candidate set and guaranteea unique valid

solutionin the underlying database. In total, we construct

270 group travel planning instances, where each traveler

may reference or join any previous plans, forming depen-

dency chains of up to depth four.

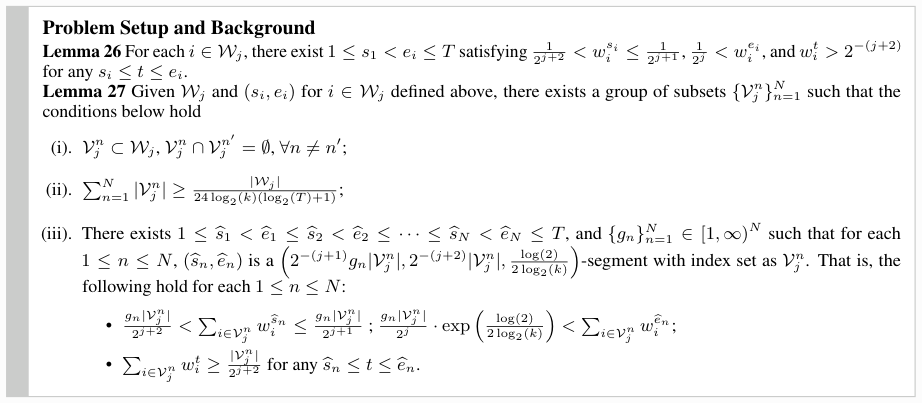

Sequential Formal Reasoning: Math & PhysicsThe

Formal Mathematical Reasoning environment is designed to

reflect the structure and difficulty of research-level reason-

ing in scientific papers. Unlike standard math benchmarks

that emphasize short, self-contained problems (e.g.

Sequential Reasoning and Agent Memory

- A new environment for formal reasoning uses research-level math and physics papers to test agents on long-context, multi-step logical arguments.

- Expert PhDs decomposed complex claims into ordered sequences of lemmas and propositions, ensuring that every statement is causally consistent with prior results.

- The test set includes 60 problems that mirror the structure of academic derivations, requiring the reuse of established conclusions over deep dependency chains.

- Evaluation differentiates between single-session tasks and multi-session interactions where agents must carry over information across temporally isolated steps.

- This benchmarking framework addresses the need for persistent memory in agents that must complete interdependent subtasks sequentially.

Verifying a single claim often requires pages of derivations and careful reuse of previously established conclusions, making this setting a natural testbed for evaluating long-term memory and multi-step formal reasoning.

eea unique valid

solutionin the underlying database. In total, we construct

270 group travel planning instances, where each traveler

may reference or join any previous plans, forming depen-

dency chains of up to depth four.

Sequential Formal Reasoning: Math & PhysicsThe

Formal Mathematical Reasoning environment is designed to

reflect the structure and difficulty of research-level reason-

ing in scientific papers. Unlike standard math benchmarks

that emphasize short, self-contained problems (e.g., AIME),

major theoretical claims in fields such as learning theory and

differential geometry typically depend on long-context ar-

guments involving multiple intermediate results, definitions,

and lemmas. Verifying a single claim often requires pages of

derivations and careful reuse of previously established con-

clusions, making this setting a natural testbed for evaluating

long-term memory and multi-step formal reasoning.

To construct this environment, we assemble a data creationteam of senior PhD-level experts in theoretical mathemat-

ics and physics to manually curate and annotate academic

papers with long and structured derivations. Experts review

the papers, select those whose central claims rely on ex-

tended chains of prior results, and decompose each central

claim into an ordered sequence of intermediate statements

(primarily lemmas and propositions) following the original

structure of the source paper. Similarly, papers are discarded

if the derivation lacks strict causal consistency, meaning that

any statement depends on information introduced later in the

argument. For each remaining paper, experts record all nec-

essary background required to justify each statement, such

as notations, definitions, remarks, and algorithms. Each

intermediate and final statement is then framed as a ques-

tion with an expert-verified ground-truth answer, and the

complete reasoning trajectory is recorded. Statements that

are not naturally verifiable (e.g., existence assumptions) are

provided as fixed facts to support subsequent reasoning.

The final test set consists of 40 multi-question problems

in mathematics and 20 in physics, each corresponding to

a full derivation chain extracted from real research papers.

The expert-curated derivation chains ensure high quality and

introduce challenges well beyond existing math benchmarks,

making this environment a rigorous test of both long-context

memory and formal reasoning.

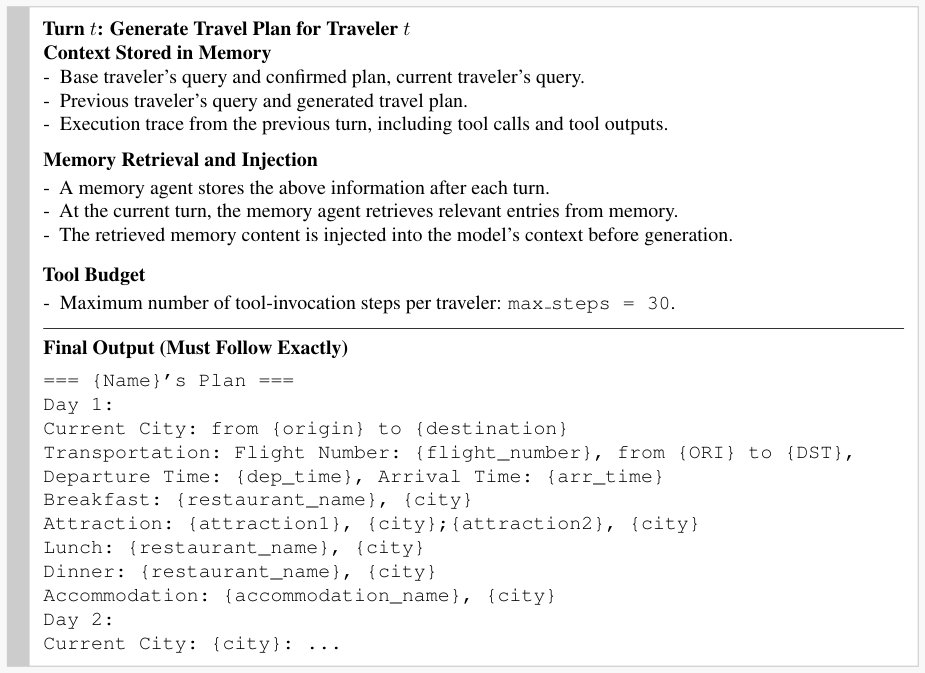

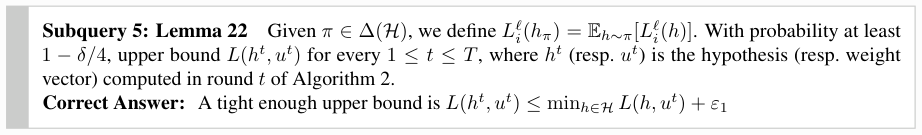

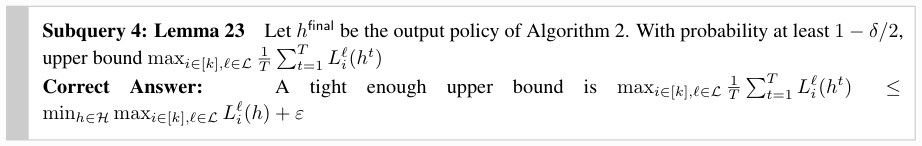

3.2. Evaluation: Memory-Agent-Environment Loop

Single-Session Agent-Environment Interactions.When

anLLM agent Ainteract with anenvironment Eover certain

agentic task s(e.g., buy a camera lens), the agent Ainteracts

withEover a sequence of steps indexed by t= 1, ..., T i.

At each step t, the agent selects an action (e.g., search the

camera lens name) from its action space conditioned on

the current instruction and the interaction history within the

session, and the environment responds with an observation

(e.g., show search results):

ai,t∼πA(·|s, o i,1:t−1 , ai,1:t−1 ), o it∈ O(1)

In single-session tasks, the agent usually is provided with

the complete interaction history (trace) as context at every

step, until the task is terminated (e.g., after purchasing a

camera lens).

Multi-session Agent-Environment Interactions.In real

cases, a task may have multiple subtasks S={s i}n

i=1, and

subtasks are executed sequentially: [s1→s 2→ ··· →s n].

Using bundled web shopping as an example (e.g., buy a

camera body with lens and cases), each subtask siis exe-

cuted as a separatesession2(e.g., first buy a camera body).

While each session is temporally isolated, later subtasks may

2Unless otherwise specified, we use the wordsessionandsub-

taskinterchangeably

5

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

depend on information acquired in earlier ones (e.g., the

version of the camera body bought before must be known

when buying lens), motivating the need for a persistent state

across sessions.

Persistent Memory-Agent Loops

- Complex agentic tasks are often divided into subtasks executed in separate, temporally isolated sessions.

- A persistent memory system is required to bridge these sessions, ensuring information like previous purchases or decisions informs future actions.

- The Memory-Agent-Environment Loop formalizes the process through two core functions: retrieval of task-relevant data and the updating of memory after subtask completion.

- While single-session agents can rely on implicit history, multi-session agents lose access to interaction traces once a session terminates, making explicit memory mandatory.

- This framework allows various memory architectures, such as RAG systems or long-context buffers, to influence an agent's memory-conditioned policy.

Task-relevant information must be selectively stored and retrieved through a persistent memory system in order to support decision-making in later subtasks.

nd cases), each subtask siis exe-

cuted as a separatesession2(e.g., first buy a camera body).

While each session is temporally isolated, later subtasks may

2Unless otherwise specified, we use the wordsessionandsub-

taskinterchangeably

5

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

depend on information acquired in earlier ones (e.g., the

version of the camera body bought before must be known

when buying lens), motivating the need for a persistent state

across sessions.

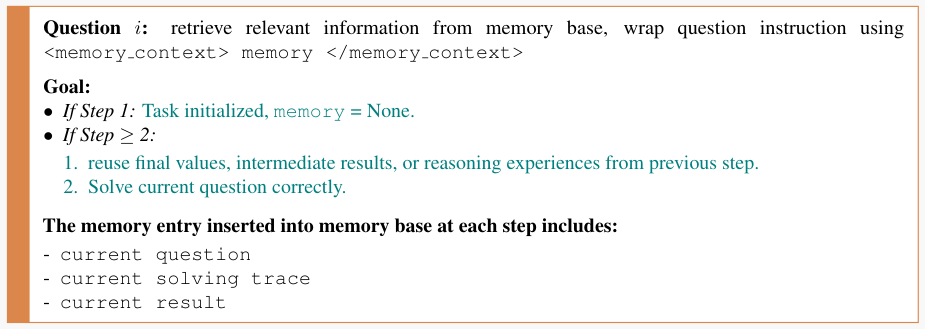

Final: Memory-Agent-Environment Loop.We equip

the agent Awith a persistent memory system M, which

stores information across subtask sessions and is initialized

as empty at the beginning of each evaluation episode. M

can be a long-context buffer, a RAG system, or another

memory agent. Usually, a memory system defines the two

abstract functions3: (1)retrievalwhich returns task-relevant

memory given a query, and (2)updatewhich incorporates

information from a completed subtask intoM.

At each action step tin subtask si, the agent retrieves rele-

vant memory based on the current subtask, and actions are

selected according to a memory-conditioned policy:

mi,t=RETRIEVE(M, s i, ai,1:t−1 , oi,1:t−1 ).(2)

ai,t∼πA(·|si, oi,1:t−1 , ai,1:t−1 , mi,t)(3)

Upon subtask completion, the memory system is updated

as:

M ←UPDATE(M,(o i,1:T, ai,1:T))(4)

The updated memory is carried forward to the next subtask

si+1, enabling information acquired in earlier sessions to

influence future decision-making. We call it theMemory-

Agent-EnvironmentLoop.

In single-session execution, the agent–environment interac-

tion implicitly follows a Memory-Agent-Environment loop,

as the history of interactions added in the context of each

action step can be viewed as the working memory of a single

session. In such settings, persistent memory is not strictly

required. In contrast, in multi-session settings, subtasks

are executed in separate sessions whose interaction traces

are no longer directly accessible once a session terminates.

Task-relevant information must be selectively stored and

retrieved through a persistent memory system in order to

support decision-making in later subtasks. This explicitly

enforces the Memory-Agent-Environment loop when the

cumulative interaction trace spans multiple sessions and

exceeds the scope of single-session context.

4. Experiments

4.1. Experimentation Setup

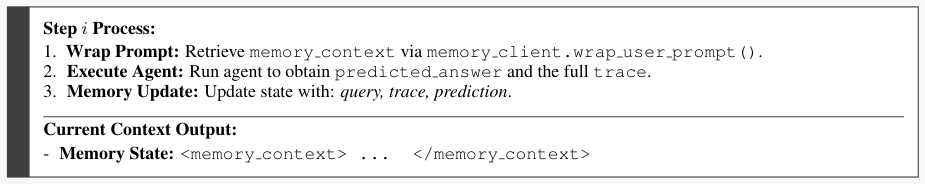

Following prior setups (Wu et al., 2025; Hu et al., 2025b),

agents equipped with Mhas three representative paradigms

3If the memory system is a long-context buffer, the retrieval

function returns a concatenation of all past history, and the update

function just appends the interactions of the current session into

the buffer.

/uni00000014 /uni00000015 /uni00000016 /uni00000017 /uni00000018 /uni00000019

/uni00000023/uni0000002e/uni00000013/uni00000015/uni00000013/uni00000017/uni00000013/uni00000019/uni00000013/uni0000001b/uni00000013/uni00000014/uni00000013/uni00000013/uni00000036/uni00000058/uni00000046/uni00000046/uni00000048/uni00000056/uni00000056/uni00000003/uni00000035/uni00000044/uni00000057/uni00000048/uni00000003/uni0000000b/uni00000008/uni0000000c

(a)Bundled Web Shopping@k

/uni00000014 /uni00000015 /uni00000016 /uni00000017 /uni00000018 /uni00000019 /uni0000001a /uni0000001b

/uni00000023/uni0000002e/uni00000013/uni00000015/uni00000013/uni00000017/uni00000013/uni00000019/uni00000013/uni00000036/uni00000058/uni00000046/uni00000046/uni00000048/uni00000056/uni00000056/uni00000003/uni00000035/uni00000044/uni00000057/uni00000048/uni00000003/uni0000000b/uni00000008/uni0000000c

(b)Group Travel Plan@k

/uni00000014 /uni00000015 /uni00000016 /uni00000017 /uni00000018 /uni00000019

/uni00000023/uni0000002e/uni00000013/uni00000015/uni00000013/uni00000017/uni00000013/uni00000019/uni00000013/uni0000001b/uni00000013/uni00000014/uni00000013/uni00000013/uni00000036/uni00000058/uni00000046/uni00000046/uni00000048/uni00000056/uni00000056/uni00000003/uni00000035/uni00000044/uni00000057/uni00000048/uni0

Benchmarking Agent Memory Systems

- The MEMORYARENA benchmark evaluates how AI agents handle long-term dependencies across multiple interactive sessions.

- Agents are categorized by their memory architecture, including long-context buffers (0D), external memory with abstraction (1D), and RAG-based systems.

- The 0D memory method stores raw, verbatim history without consolidation, while 1D and 2D methods introduce learned or heuristic distillation mechanisms.

- Experimental results show a consistent decay in success rates as task dependencies span more sessions, suggesting a limit to current agent sustainability.

- High-end models like GPT-5.1-mini and Claude-Sonnet-4.5 were tested alongside specialized memory frameworks like MemGPT and GraphRAG.

The decay trend indicates agents cannot sustain execution as dependencies span more sessions.

00000044/uni00000057/uni00000048/uni00000003/uni0000000b/uni00000008/uni0000000c

(b)Group Travel Plan@k

/uni00000014 /uni00000015 /uni00000016 /uni00000017 /uni00000018 /uni00000019

/uni00000023/uni0000002e/uni00000013/uni00000015/uni00000013/uni00000017/uni00000013/uni00000019/uni00000013/uni0000001b/uni00000013/uni00000014/uni00000013/uni00000013/uni00000036/uni00000058/uni00000046/uni00000046/uni00000048/uni00000056/uni00000056/uni00000003/uni00000035/uni00000044/uni00000057/uni00000048/uni00000003/uni0000000b/uni00000008/uni0000000c

(c)Progressive Web Search@k

/uni00000014 /uni00000015 /uni00000016 /uni00000017 /uni00000018 /uni00000019 /uni0000001a /uni0000001b

/uni00000023/uni0000002e/uni00000013/uni00000015/uni00000013/uni00000017/uni00000013/uni00000019/uni00000013/uni0000001b/uni00000013/uni00000014/uni00000013/uni00000013/uni00000036/uni00000058/uni00000046/uni00000046/uni00000048/uni00000056/uni00000056/uni00000003/uni00000035/uni00000044/uni00000057/uni00000048/uni00000003/uni0000000b/uni00000008/uni0000000c

(d)Formal Reasoning@k

Figure 3.Success Rate at subtask epth k. The decay trend indi-

cates agents cannot sustain execution as dependencies span more

sessions.

in MEMORYARENA:Agents with Long-context buffers

(Long-Context Agent)which append verbatim interaction

history directly before the prompt before each subtask with-

out explicit abstraction or consolidation, working as an in-

context memory. We include GPT-5.1-mini, GPT-4.1-mini,

and Gemini-3-flash, Claude-Sonnet-4.5.Agents with Exter-

nal Memory, where the agents maintain an external mem-

ory with learned or curated mechanisms for information ab-

straction, consolidation, and retrieval. We include four main-

stream agents with external memory: MemGPT (Packer

et al., 2023), Mem0 and its graph version Mem0-g (Chhikara

et al., 2025), and ReasoningBank (Ouyang et al., 2025).

Agents with Retrieval-augmented generation (RAG) sys-

tems, which use an indexed document store to store past

information and then access it via retrieval. We consider

different retrieval methods, including BM25, an embedding-

based RAG method that retrieves based on semantic similar-

ity (using OpenAI text-embedding-3-small ), and

two structured RAG approaches, MemoRAG (Qian et al.,

2025) and GraphRAG (Edge et al., 2024), in our evaluation.

Inspired by Hu et al. (2025a), we further characterize above

methods by the structure and complexity of its memory de-

sign, to guide our experiment analysis.0Dmemory method

stores raw history without abstraction or consolidation. This

includes verbatim context used by long-context agents and

flat RAG methods such as BM25 and embedding-based

RAG.1Dmemory method introduces learned or heuristic

mechanisms for consolidating and distilling information,

while maintaining a flat memory structure. Examples in-

6

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Formal ReasoningBundled

web shoppingGroup

Travel PlaningProgressive

Web Search Math Phys Memory

TypeSR PS SR PS sPS SR PS SR PS SR PSAll

Task

Avg SR

Task agent + Long Context

GPT-5.1-mini0D 0.01 0.58 0.00 0.00 0.52 0.06 0.05 0.26 0.38 0.45 0.6 0.16

GPT-4.1-mini0D 0.00 0.43 0.00 0.00 0.19 0.02 0.03 0.19 0.34 0.4 0.55 0.12

Gemini-3-Flash0D 0.120.76 0.00 0.01 0.62 0.07 0.04 0.16 0.30 0.5 0.55 0.17

Claude-Sonnet-4.50D 0.12 0.790.00 0.060.44 0.02 0.03 0.29 0.31 0.50 0.60 0.19

Long Context Avg 0.06 0.64 0.00 0.02 0.44 0.04 0.04 0.23 0.33 0.46 0.58

Task Agent + Memory Agents

Letta1D 0.00 0.5 0.00 0.00 0.35 0.16 0.09 0.13 0.31 0.45 0.65 0.15

Mem01D 0.00 0.45 0.00 0.00 0.24 0.24 0.09 0.19 0.34 0.25 0.43 0.14

Mem0-g2D 0.00 0.43 0.00 0.00 0.30 0.15 0.08 0.19 0.32 0.25 0.50 0.12

Reasoning Bank1D 0.00 0.27 0.00 0.00 0.00 0.10 0.06 0.230.350.25 0.45 0.12

Memory Avg 0.00 0.41 0.00 0.00 0.25 0.15 0.08 0.18 0.33 0.30 0.51

Task Agent + RAG Systems

BM250D 0.00 0.56 0.00 0.01 0.45 0.280.09 0.23 0.390.45 0.58 0.19

Text-Embedding-3-Small0D 0.00 0.55 0.00 0.01 0.50 0.23 0.09 0.320.36 0.6 0.7 0.

Benchmarking AI Memory Systems

- Researchers evaluated various memory systems, including flat, structured, and RAG-based models, within the challenging MEMORYARENA benchmark.

- The study introduces the Task Progress Score (PS) to quantify partial success by measuring the fraction of completed subtasks within a larger objective.

- A significant gap exists between subtask progress and total success, revealing that agents can often solve individual pieces but fail to integrate them into a cohesive whole.

- Group Travel Planning proved to be the most difficult environment, with nearly all methods resulting in near-zero success rates due to complex, interdependent constraints.

- The findings suggest that current AI agents struggle significantly with tasks that require maintaining global consistency across multiple sessions.

This pattern suggests that while agents can make some progress on individual subtasks, they fail to integrate these partial successes into globally consistent solutions dramatically.

0.5 0.00 0.00 0.35 0.16 0.09 0.13 0.31 0.45 0.65 0.15

Mem01D 0.00 0.45 0.00 0.00 0.24 0.24 0.09 0.19 0.34 0.25 0.43 0.14

Mem0-g2D 0.00 0.43 0.00 0.00 0.30 0.15 0.08 0.19 0.32 0.25 0.50 0.12

Reasoning Bank1D 0.00 0.27 0.00 0.00 0.00 0.10 0.06 0.230.350.25 0.45 0.12

Memory Avg 0.00 0.41 0.00 0.00 0.25 0.15 0.08 0.18 0.33 0.30 0.51

Task Agent + RAG Systems

BM250D 0.00 0.56 0.00 0.01 0.45 0.280.09 0.23 0.390.45 0.58 0.19

Text-Embedding-3-Small0D 0.00 0.55 0.00 0.01 0.50 0.23 0.09 0.320.36 0.6 0.7 0.23

MemoRAG1D 0.00 0.54 0.00 0.03 0.50 0.22 0.210.23 0.390.50 0.67 0.19

GraphRAG2D 0.00 0.52 0.00 0.01 0.51 0.04 0.05 0.26 0.390.55 0.63 0.17

RAG Avg 0.00 0.54 0.00 0.02 0.49 0.19 0.11 0.26 0.38 0.53 0.65

All Method Avg0.02 0.52 0.00 0.02 0.38 0.23 0.09 0.22 0.35 0.42 0.57

Table 3.Main results on task agent (gpt-5.1-mini) with long-context memory, memory agent, and RAG agent over four agentic

environments MEMORYARENA. Weboldthe global best methods and underline the group best ones within each category.0D: raw

context without any processing;1D: flat memory,2D: structured memory.SR: Success Rate.PS: Process Score (defined in Section 4.2).

sPS: soft Process Score (we provided sPS here for more informative compression as PS is all near-zero in Group Travel Planning. See

Section 4.3 for more details.)

clude MemGPT (Packer et al., 2023), Mem0 (Chhikara et al.,

2025), ReasoningBank (Ouyang et al., 2025), and mem-

oRAG (Qian et al., 2025).2Dmemory methods incorporate

structured memory, including components like or tree/graph-

based relational representations (e.g., MemGPT (Packer

et al., 2023), GraphRAG (Edge et al., 2024)).

All evaluation results are reported with GPT-5.1-mini as the

task agent equipped with different memory systems (long-

context, RAG systems or memory systems).

4.2. Evaluation Metrics

We define the TaskProgress Score(PS) to measure how

many subtasks are completed within a task. PS captures the

fraction of subtasks that are correctly completed within a

task, providing a fine-grained signal of partial progress even

when full task success is not achieved. Formally, consider

a test set of Ntasks ({S1, S2,···, S N})where each task

consists of |Si|ordered substask ( Si= [s 1, s2,···, s |Si|]).

Let|spass

i|denote the number of passed subtasks in Si, the

overall Progress Score is computed as the aggregated task-

level Progress Score:

PSSi=|spass

i|

|Si|,PS=1

NNX

iPSSi (5)We also report the Task Success Rate (SR), which measures

the percentage of tasks that are fully solved. In Bundled Web

Shopping and Group Travel Planning, a task is successful

if the final bundle or plan satisfies all group members. In

Progressive Web Search and Formal Reasoning, success is

determined by the correctness of the final subtask, which is

the concluding search query or the major math or physics

problems.

4.3. Main Results

Overall Results and Task Difficulty.Table 3 reports the

Task Success Rate (SR) and Task Progress Score (PS) across

environments. Overall, all methods achieve low SR and PS,

with two environments exhibiting near-zero SR, indicating

that MEMORYARENAposes a challenging evaluation setting.

Examining the gap between SR and PS, we find that most

methods have much higher PS than SR (except in Group

Travel Planning with both near zero). This pattern suggests

that while agents can make some progress on individual

subtasks, they fail to integrate these partial successes into

globally consistent solutions dramatically.

Group Travel Planning remains the most challenging envi-

ronment in MEMORYARENA, with both SR and PS near zero

across all methods. Here each subtask requires planning a

30-slot itinerary, where every slot is governed by constraints

such as joining a group activity, coordinating an activity with

7

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

one or more participants, or selecting an individual activity

that depends on earlier decisions.

Memory Limits in Agent Tasks

- Group Travel Planning is the most difficult task due to its 30-slot itineraries and complex interdependent constraint chains.

- External memory and RAG systems do not consistently outperform raw long-context history due to representation and training mismatches.

- External memory is primarily helpful when tasks push agents beyond their reasoning capacity, reducing noise and attention saturation.

- Agent performance inevitably decays as subtask depth increases, showing that current models cannot sustain execution over deeply interdependent sessions.

Consequently, pairing strong long-context agents with external memory does not reliably produce a “1 + 1>2” effect.

onsistent solutions dramatically.

Group Travel Planning remains the most challenging envi-

ronment in MEMORYARENA, with both SR and PS near zero

across all methods. Here each subtask requires planning a

30-slot itinerary, where every slot is governed by constraints

such as joining a group activity, coordinating an activity with

7

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

one or more participants, or selecting an individual activity

that depends on earlier decisions. Successfully completing

the itinerary demands accurate recall of previously specified

preferences and long-horizon reasoning over interdependent

constraint chains across slots, placing strong requirements

on both memorization and long-chain reasoning that remain

beyond the capabilities of current agents.

To enable informative comparison in Group Travel Planning

(as hard SR and PS are zero for all methods), we addition-

ally report a soft Progress Score (sPS), where each subtask

receives partial credit based on the fraction of constraints it

satisfies. Task-level soft progress is computed by averaging

subtask sPS, with overall sPS averaged across tasks. We

use sPS when discussing Group Travel Planning in later

analysis.

External Memory and RAG Systems Are Not Univer-

sally Beneficial.We find that augmenting GPT-5-mini

with external memory or RAG does not consistently outper-

form using the model’s full long-context history alone. We

attribute this outcome to two forms of mismatch. First, a

representation mismatch: long-context agents reason over

a self-consistent, verbatim interaction history, whereas ex-

ternal memory systems typically return compressed, seg-

mented, or reordered information that may not align well

with in-context learning over raw context. Second, atrain-

ing mismatch: external memory systems are not jointly

optimized with the task agent, leaving the agent suboptimal

at formulating effective queries and integrating retrieved in-

formation into its reasoning process. Consequently, pairing

strong long-context agents with external memory does not

reliably produce a “1 + 1>2” effect.

When External Memory Helps.As shown in Table 3,

external memory yields consistent performance gains in

Progressive Web Search and Formal Reasoning. In Pro-

gressive Web Search, individual subtask traces can exceed

120k tokens, while in Formal Reasoning, subtasks require

highly complex and domain-specific reasoning. Both set-

tings push the agent beyond its effective reasoning capacity

when conditioned on long contexts alone. In such regimes,

long-context prompts are susceptible to attention satura-

tion and error accumulation, as early mistakes persist in

the context and propagate to later decisions. External mem-

ory mitigates these failure modes by selectively abstracting,

distilling, and retaining task-relevant information, thereby

reducing noise and alleviating attention saturation.

4.4. Results on Interdependent Subtasks

We analyze agent performance under increasing subtask in-

terdependency using SR at subtask depth k(@k), defined as

the fraction of task instances that are correctly completed at

thek-th subtask. This metric characterizes how well agentssustain execution as dependencies span more sessions.

As shown in Figure 3, all evaluated methods exhibit a decay

with no method maintaining a consistent flat region across

environments. This observation suggests that neither long-

context models nor existing external memory or retrieval

mechanisms are sufficient to reliably support agent long-

horizon execution over deeply interdependent subtasks.

The rate of decay, however, varies across task settings. In

Progressive Web Search, where each session induces sub-

stantially longer reasoning traces, ( >122k ) long-context

agents degrade more rapidly as kincreases, as context can

go beyond effective context window more easily.

Agent Memory Performance Trade-offs

- Neither long-context models nor external memory systems currently provide reliable support for long-horizon execution of interdependent tasks.

- Retrieval-based (RAG) systems prove more robust than external memory for tasks requiring the precise reuse of specific historical data.

- Long-context agents exhibit the lowest latency and remain competitive, whereas external memory systems incur the highest time costs.

- There is no systematic relationship between the complexity of a memory mechanism and its actual execution latency in practice.

- The MEMORYARENA environment models multi-session tasks as partially observable processes where the full state remains hidden from the agent.

Across sessions, the agent never directly observes the full underlying task state (e.g., the latent bundle specification, the evolving set of group constraints, or the intermediate dependencies required by later subtasks).

nts. This observation suggests that neither long-

context models nor existing external memory or retrieval

mechanisms are sufficient to reliably support agent long-

horizon execution over deeply interdependent subtasks.

The rate of decay, however, varies across task settings. In

Progressive Web Search, where each session induces sub-

stantially longer reasoning traces, ( >122k ) long-context

agents degrade more rapidly as kincreases, as context can

go beyond effective context window more easily. In con-

trast, agents augmented with external memory or retrieval

exhibit slower decay, as these systems re-surface relevant in-

formation from earlier subtasks when the accumulated trace

becomes not accessible directly. In tasks that require precise

reuse of earlier subtask information, such as recalling in-

termediate results in formal reasoning or referencing exact

activities and time slots in group travel planning, retrieval-

based approaches are consistently more robust than agents

with external memory that rely on heavier information con-

solidation and abstraction. In these cases,agents with RAG

systems exhibit slower decay in SR@ kthan that with exter-

nal memory.

4.5. Latency Evaluations

Bundled

Web

ShoppingGroup

Travel

PlanProgressive

Web

SearchFormal

Reasoning

MathFormal

Reasoning

Phys.Avg.

Long Context

GPT-5.1-mini 95 119 60 50 47 74.2

GPT-4.1-mini 31 63 22 21 31 33.6

Claude-Sonnet-4.5 56 52 180 83 38 81.8

Gemini-3-Flash 78 33 42 43 65 52.2

Memory Systems

Letta 219 150 121 77 97 132.8

Mem0 109 125 229 49 62 114.8

Mirix 83 184 90 69 69 99.0

Mem0-g 112 194 230 40 50 125.2

Reasoning Bank 216 146 76 64 75 115.4

RAG Systems

BM25 134 162 149 41 51 107.4

Text Embeddings 127 90 196 58 64 107.0

MemoRAG 101 192 80 64 77 102.8

GraphRAG 96 108 119 58 70 90.2

Table 4.Latency of agents with different memory paradigms (sec.).

In Table 4, we additionally report subtask completion time

as a diagnostic measure of end-to-end execution latency for

agents equipped with different memory mechanisms (ad-

ditional statistics are provided in Appendix C.1). Overall,

agents with external memory always incur the highest la-

tency, with retrieval-based systems falling in between, while

long-context agents consistently exhibit the lowest latency

across environments. Notably, long-context agents achieve

this efficiency while remaining competitive in task perfor-

mance in several settings (see Section 4.3).

Across both agents with external memory and agents with

RAG systems, we do not observe a systematic relationship

8

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

between memory operation complexity and execution la-

tency. More complex memory mechanisms (e.g.,2D) do not

necessarily incur higher task execution time, nor do simpler

designs (e.g., 0D) consistently yield better efficiency. Sub-

stantial latency variation also exists among methods with

similar memory architectures, indicating that operational

complexity alone is not a reliable predictor of end-to-end

latency.

These findings suggest that, beyond jointly optimizing

memory mechanisms and task agents for functional inte-

gration, future work should explicitly consider the trade-

offs between memory effectiveness and execution la-

tency—especially in multi-session agentic settings where

memory is repeatedly accessed.

4.6. MEMORYARENAas a POMDP Testbed

We view the multi-session agent-environment loop in MEM-

ORYARENAas a natural instance of apartially observable

Markov decision process(POMDP). Across sessions, the

agent never directly observes the full underlying task state

(e.g., the latent bundle specification, the evolving set of

group constraints, or the intermediate dependencies required

by later subtasks). Instead, at each session it receives a par-

tial observation consisting of the current subtask instruction

and environment feedback.

Memory as Belief-State Estimation

- MEMORYARENA frames multi-session agent interaction as a partially observable Markov decision process (POMDP) where agents lack direct access to full task states.

- The study identifies 'belief drift' as a primary cause of performance decay, where small errors in state estimation accumulate across sessions to ruin final outcomes.

- External memory is theorized as a tool for belief-state estimation, yet current state-of-the-art systems fail to support the rigorous state tracking required for complex tasks.

- Failures stem from two bottlenecks: memory systems optimized for generic recall rather than state updates, and agents untrained in using memory as structured cues for planning.

- The authors conclude that memory should be evaluated as a functional component of an agentic loop rather than through simple, isolated retrieval benchmarks.

small errors in the agent’s implicit state estimate accumulate across sessions and eventually dominate downstream decisions

We view the multi-session agent-environment loop in MEM-

ORYARENAas a natural instance of apartially observable

Markov decision process(POMDP). Across sessions, the

agent never directly observes the full underlying task state

(e.g., the latent bundle specification, the evolving set of

group constraints, or the intermediate dependencies required

by later subtasks). Instead, at each session it receives a par-

tial observation consisting of the current subtask instruction

and environment feedback. Whenno external memory is

provided, the agent must rely on a truncated interaction

trace (or its internal parametric knowledge), making the

decision process effectively partially observable and history-

dependent.

This perspective yields a two-step connection to view MEM-

ORYARENAas a POMDP-oriented testbed. First, MEM-

ORYARENAexposes long-horizon partial observability in

multi-session tasks, where performance decay with depth

can be interpreted asbelief drift: small errors in the agent’s

implicit state estimate accumulate across sessions and even-

tually dominate downstream decisions, as shown in Figure 3.

Second, external memory in MEMORYARENAcan be inter-

preted asan explicit mechanism for approximating belief-

state estimation. In an idealized setting, anoptimalmemory

base that returns all and only the information necessary to

infer the current belief state (i.e., the task-relevant sufficient

statistics from past sessions) should enable an agent policy

to act as if it were operating in a fully observed MDP (or,

equivalently, to solve the underlying POMDP via a belief-

MDP reduction). However, our empirical results show that

current state-of-the-art memory systems and RAG systems

still yield low Task SR, indicating that current SOTA mem-

ory does not reliably support the kind ofstate tracking

required by the agent POMDP.

These results suggest two complementary bottlenecks. From

memory-side: contemporary memory mechanisms, oftenoptimized for generic recall, compression, or semantic-

similarity retrieval, have limited capacity to preserve and

update task-relevant state variables that are sufficient for

belief trackingunder a task’s dependency. Fromagent-

side: task agents are not trained to query, interpret, and

integrate memory outputs as structured cues forbelief up-

dates, which can lead to under-utilization or mis-utilization

of retrieved information. These motivate future work that

jointly optimizes memory representations and agent training

objectives with explicit awareness of POMDP state estima-

tion for long-horizon planning.

5. Conclusions

We introduce MEMORYARENA, an evaluation gym for agent

memory with curated multi-session tasks featuring interde-

pendent subtasks, designed to assess whether memory can

effectively support agent decision-making within a mem-

ory–agent–environment execution loop. Moving beyond

recall-based memory benchmarks and single-session agent

evaluations, MEMORYARENAtreats memory as a functional

component of agentic tasks. Empirically, state-of-the-art

agent memory methods achieve low success rates in MEM-

ORYARENA, revealing persistent challenges in maintaining

and reusing memory across interdependent sessions and un-

derscoring the need for testbeds that evaluate memory as a

functionally coherent component of LLM agents.

Impact Statement

This paper presents work whose goal is to advance the field

of Machine Learning. There are many potential societal

consequences of our work, none of which we feel must be

specifically highlighted here.

References

Ai, Q., Tang, Y ., Wang, C., Long, J., Su, W., and Liu, Y .

Memorybench: A benchmark for memory and continual

learning in llm systems.arXiv preprint arXiv:2510.17281,

2025.

Akshathala, S., Adnan, B., Ramesh, M., Vaidhyanathan,

K., Muhammed, B., and Parthasarathy, K. Beyond task

completion: An assessment framework for evaluating

agentic ai systems.arXiv preprint arXiv:2512.12791,

2025.

An, C., Gong, S., Zhong, M., Zhao, X., Li, M., Zhang, J.,

Kong, L.

Benchmarking AI Agent Memory

- The academic references catalog a wide range of new benchmarks focused on memory and long-context capabilities for large language model agents.

- Current research is shifting from simple task completion metrics toward evaluating multi-session interdependence and episodic memory.

- System assessments like Agencybench are now testing the limits of autonomous agents using contexts as large as one million tokens.

- Innovative tools such as 'agent-as-a-judge' are being proposed to provide more transparent and automated evaluations of agentic search.

- The documents cite various projects aimed at making AI memory production-ready and scalable for real-world software engineering and web tasks.

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

be

specifically highlighted here.

References

Ai, Q., Tang, Y ., Wang, C., Long, J., Su, W., and Liu, Y .

Memorybench: A benchmark for memory and continual

learning in llm systems.arXiv preprint arXiv:2510.17281,

2025.

Akshathala, S., Adnan, B., Ramesh, M., Vaidhyanathan,

K., Muhammed, B., and Parthasarathy, K. Beyond task

completion: An assessment framework for evaluating

agentic ai systems.arXiv preprint arXiv:2512.12791,

2025.

An, C., Gong, S., Zhong, M., Zhao, X., Li, M., Zhang, J.,

Kong, L., and Qiu, X. L-eval: Instituting standardized

evaluation for long context language models. InProceed-

ings of the 62nd Annual Meeting of the Association for

Computational Linguistics (Volume 1: Long Papers), pp.

14388–14411, 2024.

9

Benchmarking Agent Memory in Interdependent Multi-Session Agentic Tasks

Bai, Y ., Lv, X., Zhang, J., Lyu, H., Tang, J., Huang, Z.,

Du, Z., Liu, X., Zeng, A., Hou, L., et al. Longbench: A

bilingual, multitask benchmark for long context under-

standing. InProceedings of the 62nd annual meeting of

the association for computational linguistics (volume 1:

Long papers), pp. 3119–3137, 2024.

Chen, Z., Ma, X., Zhuang, S., Nie, P., Zou, K., Liu, A.,

Green, J., Patel, K., Meng, R., Su, M., et al. Browsecomp-

plus: A more fair and transparent evaluation benchmark

of deep-research agent.arXiv preprint arXiv:2508.06600,

2025.

Chhikara, P., Khant, D., Aryan, S., Singh, T., and Yadav, D.

Mem0: Building production-ready ai agents with scalable

long-term memory.arXiv preprint arXiv:2504.19413,

2025.

Deng, X., Gu, Y ., Zheng, B., Chen, S., Stevens, S., Wang,

B., Sun, H., and Su, Y . Mind2web: Towards a general-

ist agent for the web.Advances in Neural Information

Processing Systems, 36:28091–28114, 2023.

Deshpande, D., Gangal, V ., Mehta, H., Kannappan, A.,

Qian, R., and Wang, P. Memtrack: Evaluating long-term

memory and state tracking in multi-platform dynamic

agent environments.arXiv preprint arXiv:2510.01353,

2025.

Edge, D., Trinh, H., Cheng, N., Bradley, J., Chao, A., Mody,

A., Truitt, S., and Larson, J. From local to global: A graph

rag approach to query-focused summarization.arXiv

preprint arXiv:2404.16130, 2024.

Fang, S., Wang, Y ., Liu, X., Lu, J., Tan, C., Chen, X., Huang,

Y . Z., Qiu, X., et al. Agentlongbench: A controllable

long benchmark for long-contexts agents via environment

rollouts.arXiv preprint arXiv:2601.20730, 2026.

Gou, B., Huang, Z., Ning, Y ., Gu, Y ., Lin, M., Qi, W.,

Kopanev, A., Yu, B., Guti ´errez, B. J., Shu, Y ., et al.

Mind2web 2: Evaluating agentic search with agent-as-a-

judge.arXiv preprint arXiv:2506.21506, 2025.

Hsieh, C.-P., Sun, S., Kriman, S., Acharya, S., Rekesh, D.,

Jia, F., Zhang, Y ., and Ginsburg, B. Ruler: What’s the

real context size of your long-context language models?

arXiv preprint arXiv:2404.06654, 2024.

Hu, Y ., Liu, S., Yue, Y ., Zhang, G., Liu, B., Zhu, F., Lin, J.,

Guo, H., Dou, S., Xi, Z., et al. Memory in the age of ai

agents.arXiv preprint arXiv:2512.13564, 2025a.

Hu, Y ., Wang, Y ., and McAuley, J. Evaluating memory in

llm agents via incremental multi-turn interactions.arXiv

preprint arXiv:2507.05257, 2025b.Jimenez, C. E., Yang, J., Wettig, A., Yao, S., Pei, K., Press,

O., and Narasimhan, K. Swe-bench: Can language mod-

els resolve real-world github issues?arXiv preprint

arXiv:2310.06770, 2023.

Langley, P. Crafting papers on machine learning. In Langley,

P. (ed.),Proceedings of the 17th International Conference

on Machine Learning (ICML 2000), pp. 1207–1216, Stan-

ford, CA, 2000. Morgan Kaufmann.

Li, K., Shi, J., Xiao, Y ., Jiang, M., Sun, J., Wu, Y ., Xia,

S., Cai, X., Xu, T., Si, W., et al. Agencybench: Bench-

marking the frontiers of autonomous agents in 1m-token

real-world contexts.arXiv preprint arXiv:2601.11044,

2026a.

Li, X., Zhu, Z., Liu, S., Ma, Y ., Zang, Y ., Cao, Y .,

and Sun, A. Emembench: Interactive benchmarking

of episodic memory for vlm agents.arXiv preprint

arXiv:2601.16690, 2026b.

Liu, S., Liu, M., Zhou, H., Cui, Z., Zhou, Y ., Zhou, Y ., Fan,

W.

Agent Memory Benchmarking Frontiers

- The cited literature highlights a shift toward evaluating autonomous agents within extremely long context windows, reaching up to one million tokens in real-world scenarios.

- New benchmarks like Emembench and Agencybench are being developed to specifically test the episodic memory and reasoning capabilities of vision-language model agents.

- The conceptualization of Large Language Models as operating systems, exemplified by MemGPT, indicates a move toward more sophisticated memory management and retrieval architectures.